Evaluating the Impact of Uncertainty Visualization on Model Reliance

Jieqiong Zhao, Yixuan Wang, Michelle V. Mancenido, Erin K. Chiou, Ross Maciejewski

DOI: 10.1109/TVCG.2023.3251950

Room: 109

2023-10-26T00:09:00Z

Keywords

Uncertainty; model reliance; trust; human-machine collaborations

Abstract

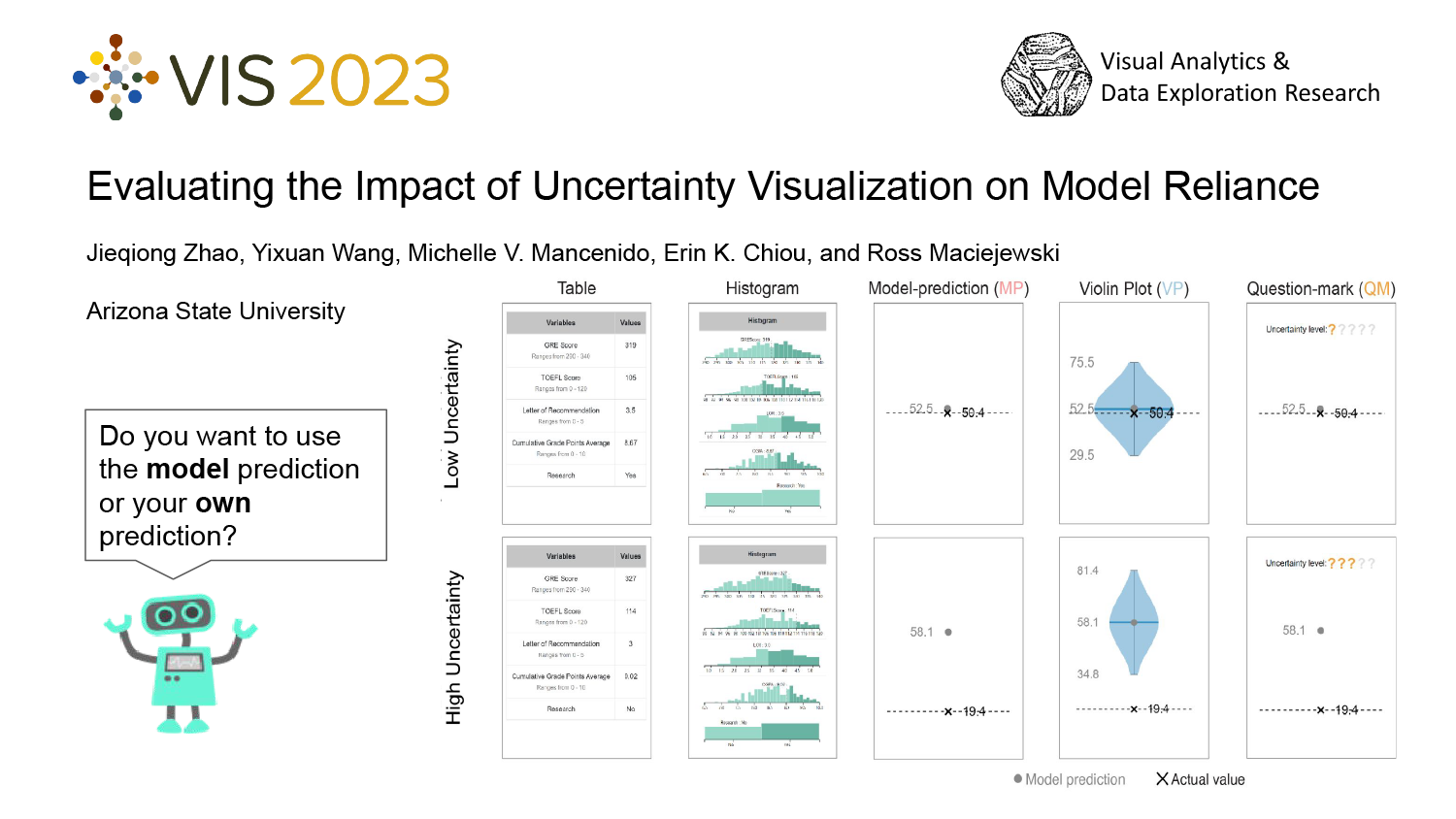

Machine learning models have gained traction as decision support tools for tasks that require processing large amounts of data. However, to achieve the primary benefits of automating this part of decision-making, people must be able to trust the machine learning model's outputs. To enhance people's trust and promote appropriate reliance on the model, visualization techniques such as interactive model steering, performance analysis, model comparison, and uncertainty visualization have been proposed. In this study, we tested the effects of two uncertainty visualization techniques in a college admissions forecasting task, under two task difficulty levels, using Amazon's Mechanical Turk platform. Results show that (1) people's reliance on the model depends on the task difficulty and the level of machine uncertainty, and (2) ordinal forms of expressing model uncertainty are more likely to calibrate model usage behavior. These outcomes emphasize that reliance on decision support tools can depend on the cognitive accessibility of the visualization technique and on perceptions of model performance and task difficulty.